13 Dec 2018

How To – The Properties of Sound

by Sidney Kidman.

Andy Stewart of The Mill writes a regular column in CX which I always look forward to reading. Earlier this year Andy wrote two articles about positioning voices in the studio mix, covering early reflections and reverbs. I found Andy’s advice excellent (as always), heightening my awareness of how voices (vocal and instrumental) will sit in the mix.

As we work in our DAWs (Pro Tools, Cubase, Ableton, Reason etc.), we use “black boxes” as tools to manipulate our raw sounds. These are the many processors such as equalizers, compressors, delays, and reverbs. Once they were literally boxes of electronics that we patched in via a patch bay, but now they exist mostly as digital algorithms (plug-ins) that can be called up and inserted on the sound we want to modify.

We’re talking about working in the binary digital realm, i.e. with zeros and ones grouped into “words” (typically 32 or 64 bits long), put through long strings of arithmetic operations, mostly multiplications and additions, to modify the original digital samples of the sound.
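To make the “black box” idea a little more concrete, here is a minimal sketch. It is not how any particular DAW plug-in is actually written. It is a one-tap echo that mixes a delayed, quieter copy of the signal back in. The function name `add_echo` and its parameters are hypothetical. Real plug-ins process buffers of floating-point samples at rates such as 44.1 or 48 kHz, but much of their per-sample work is this kind of multiply-and-add.

```python
def add_echo(samples, delay_samples, gain):
    """Return samples with one delayed, attenuated copy mixed in (hypothetical helper).

    Each output sample is y[n] = x[n] + gain * x[n - delay_samples]:
    one multiplication and one addition per sample.
    """
    out = []
    for n, x in enumerate(samples):
        # Before the delay has elapsed there is nothing to echo yet.
        delayed = samples[n - delay_samples] if n >= delay_samples else 0.0
        out.append(x + gain * delayed)
    return out


# A single click (impulse) followed by silence, at a toy sample rate.
click = [1.0, 0.0, 0.0, 0.0, 0.0, 0.0]
print(add_echo(click, delay_samples=3, gain=0.5))
# → [1.0, 0.0, 0.0, 0.5, 0.0, 0.0]
```

The click comes out first, followed three samples later by an echo at half the amplitude. That delayed, quieter copy is a digital stand-in for a single reflection.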

Many users will not understand the precise operations of these tools, but we use them anyway to “improve” the raw recorded or synthesized sounds. The raw recorded sounds, of, say, vocals or acoustic instruments, probably will not sound too exciting, compared to what they will become.

Decades ago, people going about their business heard the sounds of everyday life, which included all these effects occurring due to natural processes. Now many people spend much time hearing what comes into their ears through ear buds and headphones.

With the rise of recording studios, black boxes were built to mimic these natural processes, as it was found that recorded music became more interesting when these effects were added to the recording, often in exaggerated amounts (e.g. Phil Spector’s “wall of sound”, for those of us who can remember).

As you work enthusiastically in your home studio, I think some fundamental knowledge of the properties of sound may help you choose the most effective tools, thus saving you time. I hope the following information will, in some way, augment Andy’s advice.

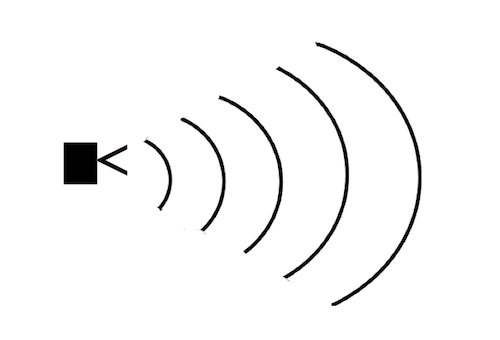

1. Sound waves emitted from speaker

Sound is propagated (travels) as pressure waves, with the energy being transferred from any vibrating surface (speaker, string, membrane) to adjacent particles in the air (or water or solid).

The balls on a snooker table offer a reasonable analogy.

Sound waves in air are longitudinal: each air particle is nudged back and forth along the direction the wave travels, passing the energy on to its neighbours rather than travelling far itself. Just as a struck clump of balls scatters in different directions, the disturbance spreads out, with the sound radiating in all directions as it propagates.

What we call ‘sound’ is the result of particles (atoms, molecules, etc.) vibrating at frequencies within the audible range, from around 20 Hz (hertz, or cycles per second) to 20 kHz. These vibrations travel out from the source of energy. Mostly we are talking about air, but bear in mind we can hear under water and through solids (e.g. bone conduction). This energy reaches our ears, and presto, we experience sound.
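One way to picture that 20 Hz to 20 kHz range is by wavelength, λ = c / f. The quick sketch below assumes a speed of sound of 343 m/s (air at about 20 °C). The range then spans roughly 17 metres at 20 Hz down to about 17 millimetres at 20 kHz. This helps explain why the same wall, doorway or piece of furniture can affect bass and treble quite differently. `wavelength_m` is a hypothetical helper.

```python
SPEED_OF_SOUND = 343.0  # m/s, assumed: dry air at about 20 °C


def wavelength_m(frequency_hz, c=SPEED_OF_SOUND):
    """Wavelength in metres: lambda = c / f."""
    return c / frequency_hz


# Bottom, middle and top of the audible range.
for f in (20, 1_000, 20_000):
    print(f"{f:>6} Hz -> {wavelength_m(f):.3f} m")
```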

S, D, DS, SB, R, ER, CD

These letters are abbreviations for some useful concepts that help us describe how sound propagates.

“S” (source) represents a sound source. It can be any vibrating object (speaker, string, drum, gong) which is transferring energy into the surrounding material, e.g. air, wood, water.

“D” (distance) represents the length of the direct path from the emitting object (e.g. a speaker) to the receiving object (e.g. ears, microphones, sound level meter, etc.). In air (the norm for our purpose), sound intensity falls off as 1/D² as it travels. This is mainly because the energy disperses three-dimensionally over an ever larger area, leaving less in any one line of direction. The air itself also absorbs some of the energy, more so at high frequencies.
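Because intensity falls as 1/D² and levels are measured in decibels, the drop in level between two distances is 10·log10 of the intensity ratio. That works out to 20·log10(D_far / D_near). A minimal sketch for a point source in free field, counting geometric spreading only and ignoring air absorption (`spreading_loss_db` is a hypothetical name):

```python
import math


def spreading_loss_db(d_near, d_far):
    """Drop in sound level (dB) between two distances from a point source in
    free field, from the inverse square law alone (air absorption ignored)."""
    return 20 * math.log10(d_far / d_near)


print(round(spreading_loss_db(1, 2), 2))   # doubling the distance → 6.02
print(round(spreading_loss_db(1, 10), 2))  # ten times the distance → 20.0
```

This is the familiar rule of thumb: about 6 dB quieter for every doubling of distance.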

DS (direct sound) is the sound travelling in a straight line, from S to a receiver.

SB (sound beam); for convenience, we can use this term to describe any one single linear path of an emitted sound which is radiating from a sound source. The concept of a sound beam enables us to understand what happens when one such SB (sound beam) reaches a surface of a different medium, say, a wall of concrete or timber.

For hard surfaces, this SB will mostly be reflected (think of how the waves keep rippling in a swimming pool). The angle of reflection equals the angle of incidence, mirrored about the line perpendicular to the surface.

2. Reflected sound beam

When sound reaches softer or moveable surfaces, a proportion of that sound will be absorbed and less will be reflected. In some reverb units this may be controllable as a “damping” factor.
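The two behaviours just described, mirror-like reflection and partial absorption, can be sketched in a few lines. This assumes an idealised 2-D sound beam and a single absorption coefficient α per surface (the fraction of incident energy absorbed at each bounce). The α values below are assumed, illustrative numbers, and the helper names are hypothetical.

```python
def reflect(direction, normal):
    """Mirror a 2-D direction vector off a surface with unit normal `normal`:
    r = d - 2 (d . n) n, so the angle of reflection equals the angle of incidence."""
    dx, dy = direction
    nx, ny = normal
    dot = dx * nx + dy * ny
    return (dx - 2 * dot * nx, dy - 2 * dot * ny)


def energy_after_bounces(alpha, bounces):
    """Fraction of a beam's energy left after `bounces` reflections off surfaces
    that each absorb a fraction `alpha` of the incident energy."""
    return (1 - alpha) ** bounces


# A beam heading down and to the right at 45 degrees hits a floor whose normal points up.
print(reflect((1.0, -1.0), (0.0, 1.0)))          # → (1.0, 1.0)
print(round(energy_after_bounces(0.02, 10), 3))  # hard surface (assumed alpha = 0.02)
print(round(energy_after_bounces(0.5, 10), 3))   # soft surface (assumed alpha = 0.5)
```

With these assumed coefficients, a beam keeps about 82% of its energy after ten bounces off the hard surface, but only about 0.1% after ten bounces off the soft one. This is loosely analogous to turning up a reverb's damping.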

R (reflections) represents the group of sound beams that reach our ears after bouncing off multiple surfaces along pathways longer than the DS path. They therefore arrive many milliseconds later than the DS, with a smearing effect.

ER (early reflections) represent a group of R sound beams that arrive earlier than the bulk of the R beams, because they have reflected off only perhaps one or two closer surfaces and travelled a pathway that is not much longer than the DS path.

One further term needs attention. “CD” (critical distance) is an important concept. It is the distance from a sound source at which the sum of all the reflected sound equals the direct sound in level. This matters because beyond CD the reflections dominate, and signals such as speech become progressively less intelligible.
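For an enclosed room, a widely used approximation from statistical room acoustics estimates CD from three things. These are the room volume V (m³), the reverberation time RT60 (the time in seconds for the reverb to decay by 60 dB) and the source's directivity factor Q (1 for an omnidirectional source). The sketch below assumes that approximation, which presumes a diffuse reverberant field. Real rooms will deviate from it, and the function name is hypothetical.

```python
import math


def critical_distance_m(volume_m3, rt60_s, directivity_q=1.0):
    """Approximate critical distance in metres: d_c ~= 0.057 * sqrt(Q * V / RT60).

    Assumes a diffuse reverberant field (statistical room acoustics)."""
    return 0.057 * math.sqrt(directivity_q * volume_m3 / rt60_s)


# A small 50 m^3 room with a 0.5 s reverb time and an omnidirectional source.
print(round(critical_distance_m(50, 0.5), 2))  # → 0.57
```

On this estimate, a listener or microphone well under a metre from an omnidirectional source in a small room may already be beyond CD. A more directional source (higher Q) pushes CD further out.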

Unlike light, sound travels relatively slowly. Normally we don’t think about this, but it is important. We can watch cricket on TV, but if we attend the match, we will see the batsman strike the ball and start to run before we hear the sound of leather on willow. For a rough calculation, we can take the speed of sound in air as about 330 metres per second, give or take depending on temperature. It is about 331 m/s at 0 °C and about 343 m/s at 20 °C, so use 343 (NTP) for a quick calculation at normal room temperature.
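The temperature dependence and the cricket-ground delay can both be worked out with a common linear approximation for dry air, c ≈ 331.3 + 0.606·T m/s (T in °C). This approximation is reasonable near everyday temperatures. The sketch assumes it, plus a hypothetical spectator 100 m from the pitch. The helper names are hypothetical too.

```python
def speed_of_sound_m_s(temp_c):
    """Approximate speed of sound in dry air (m/s); linear fit valid near everyday temperatures."""
    return 331.3 + 0.606 * temp_c


def arrival_delay_s(distance_m, temp_c=20.0):
    """Time for sound to travel `distance_m` metres through air."""
    return distance_m / speed_of_sound_m_s(temp_c)


# A spectator 100 m from the pitch on a 20 °C day.
print(f"{speed_of_sound_m_s(20):.1f} m/s")      # → 343.4 m/s
print(f"{arrival_delay_s(100) * 1000:.0f} ms")  # → 291 ms
```

A delay of nearly a third of a second is easily long enough to notice the gap between seeing the shot and hearing it.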

Some simple sound pathways

So what does all this mean? Our brain is clever at processing auditory signals; clever enough to recognise that a later section of a sound signal, even though it arrives many milliseconds after the DS, is that same signal, just delayed a bit.

Further, R and ER can tell us much about our surrounds.

Surprisingly, some blind people can “see” in the same way as bats, which “echolocate”: they make clicks and listen for the ER and R signals that come back. Mostly, sound reaching a target (ears, meter, mic) is a mix of direct and reflected waves.
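Echolocation rests on simple arithmetic. A click travels out to a surface and back, so the one-way distance is half the round trip: distance = c × t / 2. A minimal sketch, assuming 343 m/s and a single clean echo (`echo_distance_m` is a hypothetical name):

```python
def echo_distance_m(echo_delay_s, c=343.0):
    """Distance to a reflecting surface from the round-trip delay of a click.
    The sound travels out and back, so the one-way distance is c * t / 2."""
    return c * echo_delay_s / 2


# A click whose echo returns after 20 ms.
print(round(echo_distance_m(0.020), 2))  # → 3.43
```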

Many objects near and far play a part in the totality of sound reaching our ears. Sound environments can be enormously varied: open ground, forest, beside a structure such as a building or a cliff, in an alleyway (who hasn’t hollered for an echo in an alleyway?), in an arena, or in a room, big or small, and of course our bedroom studio.

The extremes are special rooms developed for research; the anechoic chamber, and the reverb chamber. Both are enclosed boxes, maybe big enough to fit a modest house. Both are constructed from massively thick walls of concrete, and sit on isolating suspensions.

The anechoic chamber is lined with highly absorptive material, whilst the reverb chamber has bare concrete walls. In the former, people at first find the sound of speech rather disturbing, because there is absolutely no reflected sound added to the direct signal. In the latter, it becomes very hard to converse, as speech is swamped by its own reflections, which can persist for many seconds.
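How long reflections linger in a room is usually expressed as RT60, the time for the sound to decay by 60 dB. A classic rough estimate is Sabine's formula, RT60 = 0.161·V / A. Here V is the volume in m³ and A is the total absorption: each surface area multiplied by its absorption coefficient, summed over all surfaces. The sketch below assumes a hypothetical 10 m × 8 m × 5 m room and illustrative absorption coefficients. Sabine's formula is known to be poor for very absorptive rooms such as an anechoic chamber, so the comparison is between a bare and a moderately treated room.

```python
def sabine_rt60_s(volume_m3, surfaces):
    """Sabine reverberation time RT60 = 0.161 * V / A (SI units).

    `surfaces` is a list of (area_m2, absorption_coefficient) pairs; A is the sum
    of area * coefficient. Only a rough guide, and poor for very absorptive rooms."""
    absorption = sum(area * alpha for area, alpha in surfaces)
    return 0.161 * volume_m3 / absorption


# Hypothetical 10 m x 8 m x 5 m room: volume 400 m^3, total surface area 340 m^2.
bare_concrete = [(340, 0.02)]  # assumed coefficient for bare concrete
treated = [(340, 0.20)]        # assumed average coefficient after treatment
print(f"{sabine_rt60_s(400, bare_concrete):.1f} s")  # → 9.5 s
print(f"{sabine_rt60_s(400, treated):.1f} s")        # → 0.9 s
```

With these assumed figures, bare concrete gives a decay of several seconds, while a tenfold increase in absorption brings it down to under a second.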

Let’s take a less extreme case: an observer standing at a distance from a speaker on the main runway at a big airport.

Nearly all the sound is direct (assuming no incoming or outgoing flights and no wind). There is really only one ER, and no R. The ER comes from the sound that strikes the concrete runway at the midpoint (between speaker and person). The flat grassed fields to either side will offer only minuscule reflections.

If we are standing two metres from the speaker, with both the speaker and our ears two metres above the ground, the reflected path is about 4.5 metres long instead of 2. The ER therefore arrives roughly 7 milliseconds after the DS and, from spreading alone, about 7 dB quieter. The golden-eared may be able to hear this as a separate effect, though most people will hear the delayed copy as augmenting the sound.
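For the runway example, a common trick is to treat the concrete as a perfect mirror. The reflection then behaves as if it came from an “image” speaker the same height below the ground, so its path length is a straight-line distance. The sketch below assumes that idealisation: flat, perfectly reflecting ground, 343 m/s, and level change from the inverse square law only, with no air absorption. `ground_reflection` is a hypothetical helper, not from any library.

```python
import math


def ground_reflection(distance_m, source_h_m, ear_h_m, c=343.0):
    """Delay (ms) and level drop (dB) of a single reflection off flat, perfectly
    reflecting ground, relative to the direct sound, via an image source below
    the ground. The level drop uses the inverse square law only."""
    direct = math.hypot(distance_m, source_h_m - ear_h_m)
    reflected = math.hypot(distance_m, source_h_m + ear_h_m)  # path from the image source
    delay_ms = (reflected - direct) / c * 1000
    level_drop_db = 20 * math.log10(reflected / direct)
    return delay_ms, level_drop_db


# Speaker and ears both 2 m above the runway, 2 m apart.
delay, drop = ground_reflection(2.0, 2.0, 2.0)
print(f"ER arrives {delay:.1f} ms later and {drop:.1f} dB quieter")
# → ER arrives 7.2 ms later and 7.0 dB quieter
```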

Doing some fancy maths, it can be shown that as the distance increases, this ER becomes less and less noticeable as a separate event. Its delay time gets shorter (roughly in inverse proportion to the distance) and its level approaches that of the DS, so the two merge into one.
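To see how the ER behaves as the observer walks away down the runway, the same image-source idea can be swept over distance. This is a minimal sketch under the same idealisation (flat, perfectly reflecting ground; speaker and ear both 2 m high; 343 m/s), and the helper names are hypothetical. The second function uses the far-field approximation sqrt(d² + 4h²) − d ≈ 2h²/d, which holds when the distance d is much larger than the height h.

```python
import math


def er_delay_ms(distance_m, height_m, c=343.0):
    """Exact extra delay of the ground reflection when source and ear share one height."""
    return (math.hypot(distance_m, 2 * height_m) - distance_m) / c * 1000


def er_delay_approx_ms(distance_m, height_m, c=343.0):
    """Far-field approximation: extra path ~= 2 h^2 / d, so the delay falls roughly as 1/d."""
    return 2 * height_m ** 2 / distance_m / c * 1000


def er_level_drop_db(distance_m, height_m):
    """How much quieter the ER is than the DS (inverse square law only)."""
    return 20 * math.log10(math.hypot(distance_m, 2 * height_m) / distance_m)


for d in (10, 50, 200):
    print(f"{d:>4} m: delay {er_delay_ms(d, 2):5.2f} ms "
          f"(approx {er_delay_approx_ms(d, 2):5.2f} ms), "
          f"ER {er_level_drop_db(d, 2):4.2f} dB below DS")
```

In this idealised model, the ER does not fade relative to the DS at long range; the level difference actually shrinks toward 0 dB. It becomes less noticeable because its delay collapses towards zero, so it fuses with the direct sound.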

As D increases, the sound is probably best described as getting thinner and quieter. Without getting complicated, these are the properties Andy described as sitting forward in the mix or back in the mix. In reality, as sound bounces around in various spaces, SBs meet and interact, undergoing many of the processes we might call up in our DAWs.

The shape of the space, adjoining spaces, obstacles, and furnishings all modify the resultant combination of soundwaves reaching ears or devices.

As people discovered many centuries ago, an optimal amount of reverberance added a quality of richness to a musical performance, and consequently, great opera halls were designed and built.

We can start by listening closely to everyday sounds live (i.e. spending some time without ear buds in). Listen to sounds close by and further away, as well as to various live musical performances – concerts, clubs, pub bands, buskers etc. Try to appreciate how sound is shaped. For instance, move to a different spot and listen again. Look around to see what may be influencing what you hear.

As our understanding grows, we can look more selectively at our tools in our studios to see what will best enhance the way our recordings will turn out; blending realism and excitement, using the properties of sounds.

From the December 2018 – January 2019 edition of CX Magazine. CX Magazine is Australia and New Zealand’s only publication dedicated to entertainment technology news and issues – available in print and online. Read all editions for free or search our archive www.cxnetwork.com.au

© CX Media
